AI in portfolio management platforms: why 80% of AI initiatives never reach production and what the 20% do differently

Last Updated on: May 13, 2026

Key Takeaways

I. The production failure rate your investment committee is not being told

II. The three failure modes consuming financial services AI budgets

III. What the 20% do differently

IV. Where AI Workbench delivers inside portfolio management

V. Expected outcomes – portfolio AI that reaches production

VI. Why the production failure rate will get worse

VII. Three decisions before your next AI initiative

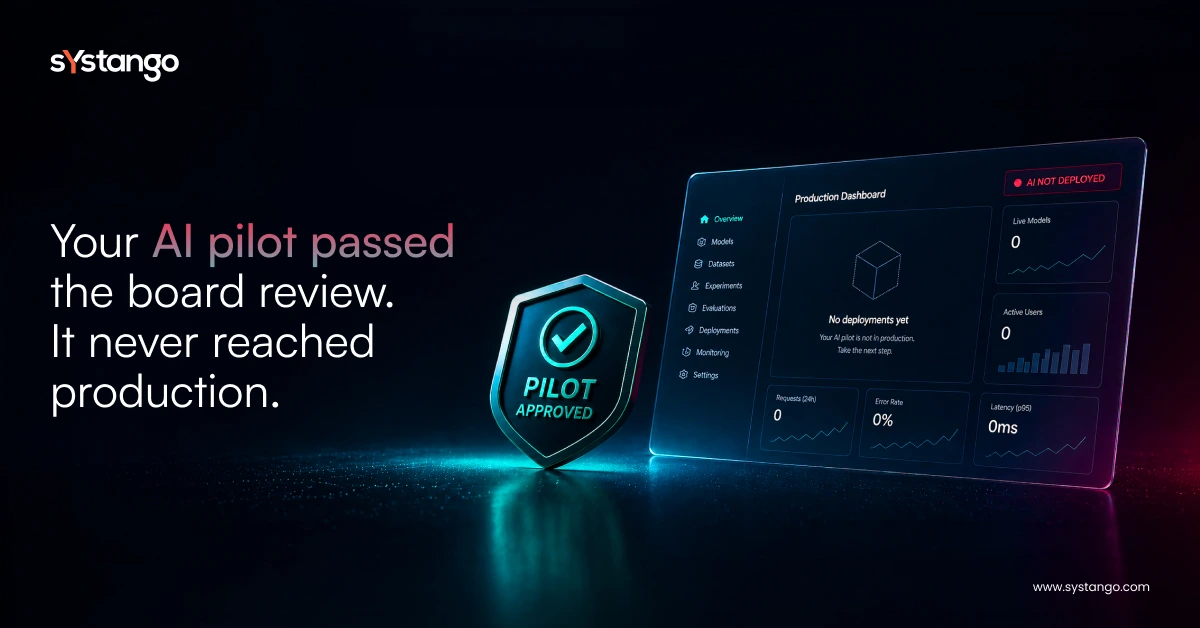

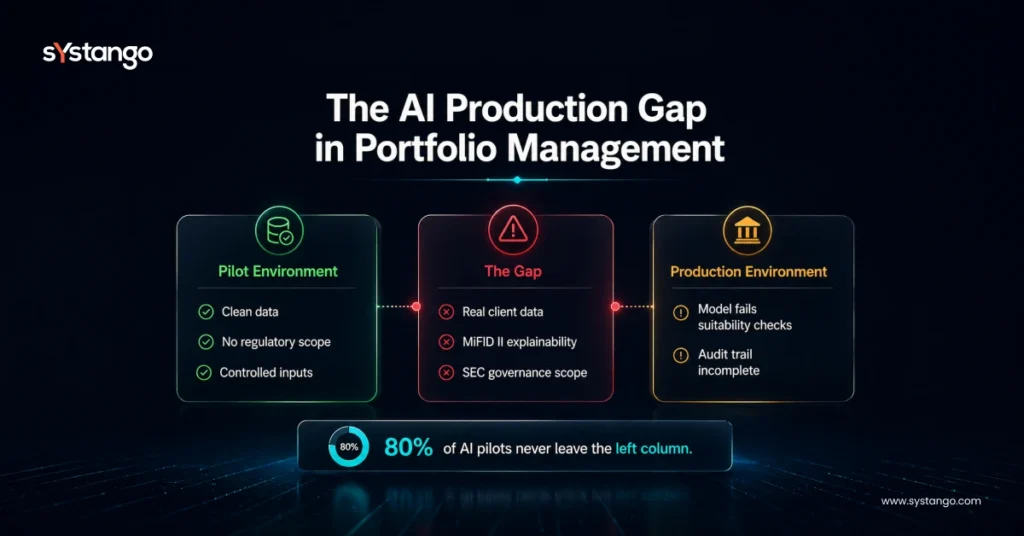

Here is the statistic your AI steering committee needs to see: 80% of AI projects in financial services never reach production, according to FinTellect AI research published with the World Economic Forum. That figure does not count projects that ship and then fail to deliver value; it counts projects that never leave the pilot stage. The portfolio management AI your team spent six months building is more likely to be shelved than shipped.

If your engineering team has a graveyard of AI proofs of concept that never scaled to production, the problem is not your data scientists, your models, or your budget. It is structural – and it is the same structural problem facing the 80% of organisations that share your outcome.

I. The production failure rate your investment committee is not being told

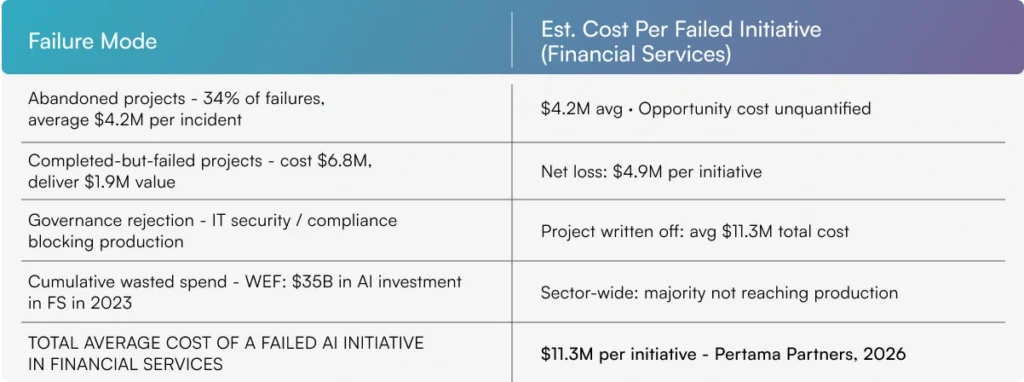

FinTellect AI’s research, published with the World Economic Forum, found 80% of AI projects in financial services fail to reach production; of those that do, 70% deliver no measurable value. Pertama Partners’ 2026 analysis puts financial services at an 82.1% AI failure rate – the highest of any sector.
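Taken together, the two FinTellect AI figures imply a much smaller success rate than either suggests alone. The short calculation below is a sketch that assumes both percentages describe the same population of projects, which the source does not state explicitly; the variable names are illustrative.

```python
# Figures quoted in the paragraph above. Combining them assumes both
# percentages apply to the same population of projects (an assumption).
reach_production = 1 - 0.80                # 20% of projects reach production
value_given_production = 1 - 0.70          # 30% of those deliver measurable value

# Share of all projects that both ship and deliver measurable value.
deliver_value_overall = reach_production * value_given_production

print(f"{deliver_value_overall:.0%} of projects deliver measurable value")
```

Under that assumption, roughly 6 in every 100 financial services AI projects both reach production and deliver measurable value.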

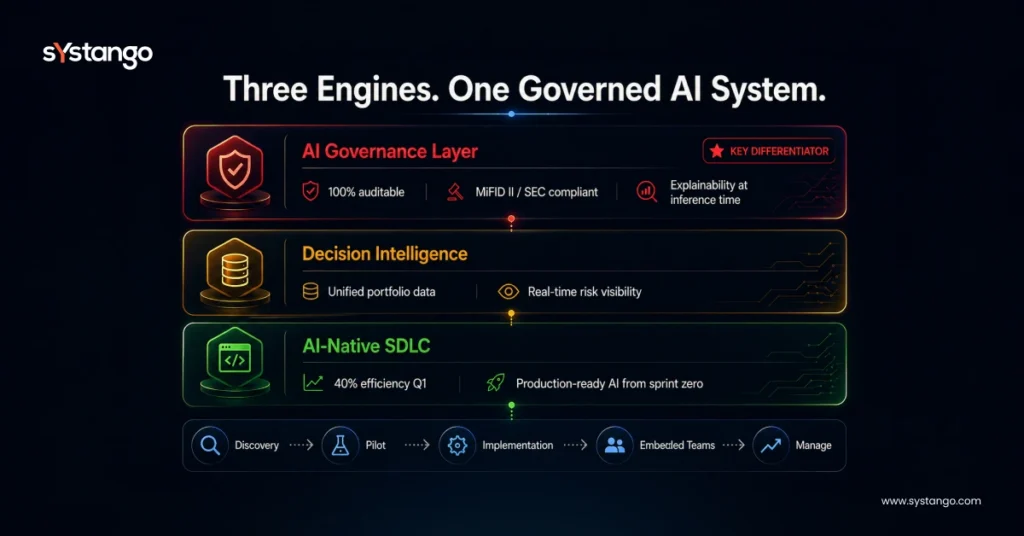

The SEC’s 2025 examination priorities include AI in portfolio management; ESMA’s MiFID II guidance requires explainability and suitability alignment at inference. An AI model that cannot produce compliant documentation in production is not production-ready.

II. The three failure modes consuming financial services AI budgets

Failure mode 1 – The data governance gap: production data is nothing like pilot data

AI pilots run on clean, curated data. Production runs on real client data – incomplete, inconsistent, and subject to privacy obligations. Informatica’s CDO Insights survey found 43% of firms cite data quality as their primary AI obstacle. The model worked in the pilot. The production data broke it.
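One way to surface this gap early is to run production-shaped records through explicit quality checks before they reach the model. The following is a minimal sketch, not a production validator: the field names (`client_id`, `risk_profile`, `holdings`) and the allowed risk profiles are hypothetical and would need to match your own schema.

```python
# Hypothetical schema: these field names and values are assumptions for illustration.
REQUIRED_FIELDS = ("client_id", "risk_profile", "holdings")
ALLOWED_RISK_PROFILES = {"conservative", "balanced", "growth"}


def validate_record(record: dict) -> list[str]:
    """Return the data-quality issues found in one record (empty list = clean)."""
    issues = []
    for field_name in REQUIRED_FIELDS:
        # Treat absent, None, empty-string and empty-list values as missing.
        if record.get(field_name) in (None, "", []):
            issues.append(f"missing {field_name}")
    profile = record.get("risk_profile")
    if profile and profile not in ALLOWED_RISK_PROFILES:
        issues.append(f"unknown risk_profile: {profile}")
    return issues


def quality_report(records: list[dict]) -> dict:
    """Summarise how many records fail validation, keyed by record index."""
    failures = {}
    for index, record in enumerate(records):
        issues = validate_record(record)
        if issues:
            failures[index] = issues
    return {"total": len(records), "failed": len(failures), "failures": failures}


sample = [
    {"client_id": "C1", "risk_profile": "balanced", "holdings": ["BOND"]},
    {"client_id": "C2", "risk_profile": "aggressive", "holdings": []},
]
report = quality_report(sample)
print(report)
```

Running the same report against pilot data and a sample of real client data gives a concrete measure of how far apart the two are before the model is blamed.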

Failure mode 2 – The compliance gate: AI that cannot explain itself cannot be deployed

ESMA’s MiFID II guidance requires AI in portfolio management to be explainable and suitability-aligned. An AI proof of concept that fails to scale to production almost always carries an unresolved compliance gap: the model cannot produce the documentation regulators require. The root cause is the absence of a governance-first AI layer in the SDLC.

Failure mode 3 – The infrastructure mismatch: enterprise scale exposes what the pilot concealed

AI pilot-to-production challenges in financial services are predominantly infrastructure challenges – latency, data pipeline reliability, IT security requirements, failover behaviour. The AI model is frequently fine. The production infrastructure it needs does not yet exist.

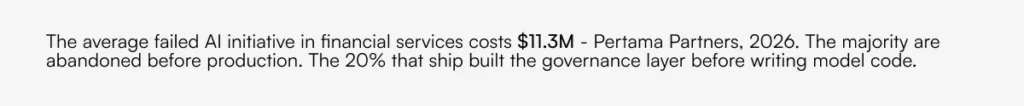

III. What the 20% do differently

1. Build production requirements into pilot design

The 20% that ship define data governance, compliance documentation, and explainability standards before the pilot begins. Deloitte’s Financial AI Adoption Report found that more than 60% of financial services firms cite compliance requirements discovered after completion as the primary cause of AI delays.

2. Instrument the model for regulatory scrutiny from sprint zero

MiFID II and the SEC’s 2025 AI governance expectations require explainability documentation generated at inference time, audit trails written at the model layer, and bias testing embedded in the CI/CD pipeline. The 20% treat these as engineering deliverables. The compliance team does not discover the gap after the model is built. They sign off on the governance framework before the model runs.
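To make "engineering deliverables" concrete, the sketch below shows one possible shape for an inference-time explanation and audit record, plus a simple group-parity check that could run as a CI test. It is illustrative only and makes no claim to satisfy any regulator's requirements: the linear model, its weights, `MODEL_VERSION`, and the function names are all hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical model: a linear scorer whose weights are invented for illustration.
MODEL_VERSION = "demo-0.1"
WEIGHTS = {"volatility": -0.5, "expected_return": 1.2, "liquidity": 0.3}


def score_with_audit(features: dict, audit_log: list) -> float:
    """Score one input and append an explanation-bearing audit record."""
    # For a linear model, weight * value is each feature's contribution,
    # so the contributions double as a per-decision explanation.
    contributions = {name: WEIGHTS[name] * features[name] for name in WEIGHTS}
    score = sum(contributions.values())
    # Hash the canonicalised input so the record is traceable without storing raw data.
    payload = json.dumps(features, sort_keys=True).encode("utf-8")
    audit_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": MODEL_VERSION,
        "input_sha256": hashlib.sha256(payload).hexdigest(),
        "score": score,
        "explanation": contributions,
    })
    return score


def outcome_rate_gap(outcomes_by_group: dict) -> float:
    """Largest gap in positive-outcome rate between groups (a basic parity check)."""
    rates = [sum(o) / len(o) for o in outcomes_by_group.values()]
    return max(rates) - min(rates)


audit_log = []
score = score_with_audit(
    {"volatility": 0.2, "expected_return": 0.1, "liquidity": 0.5}, audit_log
)
gap = outcome_rate_gap({"group_a": [1, 1, 0, 0], "group_b": [1, 0, 0, 0]})
print(json.dumps(audit_log[0], indent=2))
```

In a pipeline, a check such as `outcome_rate_gap` could be asserted against a threshold agreed with compliance, so a regression fails the build rather than surfacing at review.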

IV. Where AI Workbench delivers inside portfolio management

V. Expected outcomes – portfolio AI that reaches production

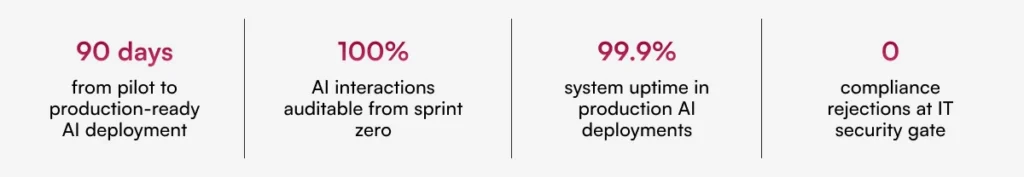

Documented delivery results from live AI Workbench engagements – not projections.

VI. Why the production failure rate will get worse

S&P Global found 42% of companies abandoned most AI initiatives in 2025, up from 17% in 2024. As AI investment in financial services grows, the first-mover advantage is not in AI adoption. It is in AI production delivery.

Two forces increasing the production failure risk in 2026

1. Regulatory tightening – The SEC’s 2025 AI examination priorities, ESMA’s MiFID II guidance, and the FCA’s Consumer Duty are all moving toward more rigorous AI governance. Every initiative started without a governance layer is more likely to fail the 2026 compliance gate than the 2024 one.

2. AI washing consequences – In 2024, the SEC penalised two advisory firms for making misleading claims about their AI capabilities. The cost of failed AI production deployments is no longer hypothetical. Governance is not optional. It is a condition of deployment.

VII. Three decisions before your next AI initiative

1. Define production gate criteria before the pilot: data governance, compliance documentation, IT security, and explainability. If you cannot define these before the pilot, you will discover them after it ends.

2. Assign compliance and IT security owners as design partners from sprint one. MIT Sloan’s research found pilot success is not a reliable predictor of production success. Embedded governance requirements are.

3. Audit your last AI pilots: what kept each one from reaching production? Each root cause maps to a governance capability to build in before the next one starts. The AI Readiness Checklist structures this in 15 minutes.
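The first decision above, defining gate criteria before the pilot, can be expressed as a small, checkable record rather than a slide. This is a minimal sketch under stated assumptions: the four criteria come from the list above, while the `GateReview` class, its fields, and the example owner names are hypothetical.

```python
from dataclasses import dataclass, field

# The four gate criteria named in decision 1.
GATE_CRITERIA = (
    "data_governance",
    "compliance_documentation",
    "it_security",
    "explainability",
)


@dataclass
class GateReview:
    """Tracks which gate criteria have a named owner's sign-off (hypothetical structure)."""
    initiative: str
    signed_off: dict = field(default_factory=dict)  # criterion -> owner name

    def missing(self) -> list[str]:
        """Criteria that nobody has signed off yet."""
        return [c for c in GATE_CRITERIA if not self.signed_off.get(c)]

    def ready_for_pilot(self) -> bool:
        """True only when every criterion has an owner's sign-off."""
        return not self.missing()


review = GateReview("portfolio-rebalancing-pilot")
review.signed_off["data_governance"] = "Head of Data"
review.signed_off["it_security"] = "CISO"
print(review.ready_for_pilot(), review.missing())
```

Anything left in `missing()` when the pilot is proposed is a requirement that would otherwise be discovered after it ends.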

About Systango

Systango is a publicly listed AI-native digital engineering company. We build governance-first AI systems for regulated FinTech, WealthTech, and InsurTech organisations in the UK and US – from funded startups to enterprises including Google and Cisco. AI-native by design, governance-first by principle, outcome-accountable by default.